Survivability Engineering, Part 6: Surviving Reality

Mar 2026

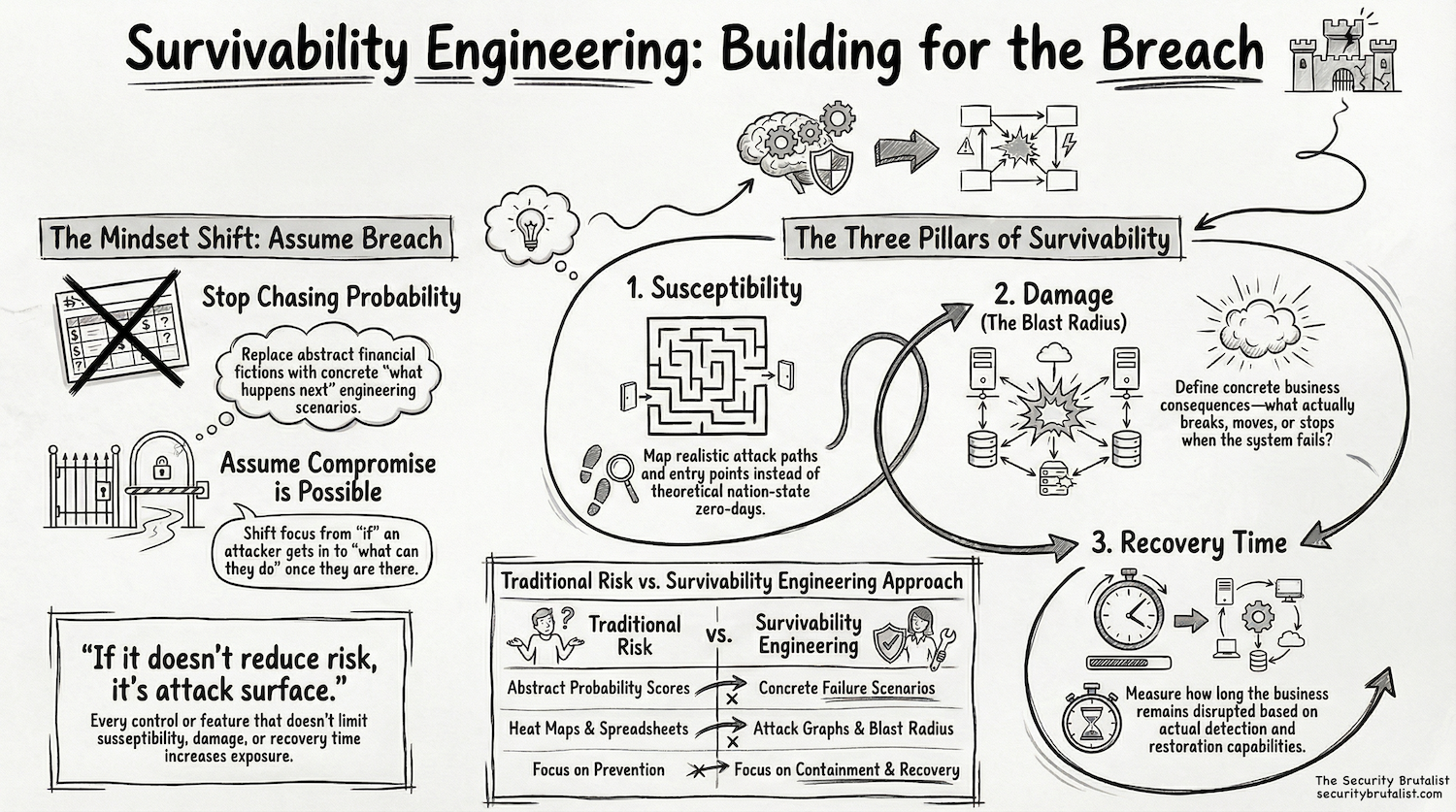

Survivability engineering is the discipline of assuming real systems will get compromised and working within that assumption to understand what breaks, how badly it breaks, and how quickly it can be restored.

Security starts degrading the moment a system goes live. Entropy builds through access, integrations, exceptions, and time. Permissions expand because people need to get work done. Systems connect because the business demands it. Controls drift because nobody maintains perfect alignment between design and operation. What you believe about a system and what is actually true separate slowly, then all at once. That separation creates attack paths.

You cannot rely on abstract models to carry you through that condition. Systems do not behave cleanly under stress; attackers do not follow assumptions; people do not act the way policies expect. Survivability engineering keeps modeling grounded in reality and moves the focus to something that holds under pressure. Assume compromise, then ask what happens next.

Anchor on consequence. Why does this system exist and what stops when it fails. Survivability engineering centers on outcomes, not components. If a system goes down and nothing meaningful breaks, the risk stays low. If it halts revenue, operations, or trust, the risk becomes obvious. That clarity sets the boundary for everything that follows.

Then move to susceptibility. How does an attacker realistically reach this system. What identities can touch it. What data flows into it. What systems influence it. Where do trust boundaries exist in theory but not in practice. Trace the path step by step and show how something small turns into something significant.
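One way to make that trace concrete is to treat identities and systems as nodes in a directed graph and list the paths from an attacker's foothold to the system that matters. The sketch below is a minimal illustration, not a tool. The node names, the `REACH` map, and the `attack_paths` helper are all hypothetical.

```python
from collections import deque

# Hypothetical "can reach or influence" edges, recorded as they work in
# practice rather than as the design documents describe them.
REACH = {
    "phished-contractor": ["vpn"],
    "vpn": ["ci-runner", "wiki"],
    "ci-runner": ["deploy-role"],
    "deploy-role": ["payments-api"],
    "wiki": [],
    "payments-api": [],
}


def attack_paths(graph, start, target):
    """Return every cycle-free path from start to target, shortest first."""
    paths = []
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            paths.append(path)
            continue
        for nxt in graph.get(node, []):
            if nxt not in path:  # never revisit a node already on this path
                queue.append(path + [nxt])
    return paths


for path in attack_paths(REACH, "phished-contractor", "payments-api"):
    print(" -> ".join(path))
```

The value is less in the search than in the edge list. Writing down which identity can actually touch which system is where the gap between belief and reality tends to show up.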

Modern systems increase susceptibility faster than most teams recognize. AI agents, automation, and integrations act with speed and scope that traditional controls never accounted for. They take input, interpret it, and act on it. Influence becomes a primary attack vector. The question shifts toward how a system can be pushed to behave in a way that creates damage.

After susceptibility, force the question most programs avoid. Assume the attacker succeeded. What damage follows: not categories or labels, but what actually happens. Can they move money. Can they access sensitive data. Can they create persistence. Can they disrupt operations. You need specificity because vague impact hides real risk.

Then define recovery time. How long does the system stay degraded. How quickly does anyone notice. How quickly can access be revoked, systems isolated, and operations restored. This is where most assumptions collapse. Backups exist but nobody tested restoration. Logs exist but nobody watches them in time to matter. Access controls exist but exceptions override them. If recovery depends on things that have never been exercised under stress through chaos testing and adversarial simulation, it remains theoretical.
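A rough way to keep that honest is to write the recovery plan down as steps, each with a claimed duration and a flag for whether it has ever been exercised. The sketch below assumes a simple sequential plan. The step names, the durations, and the `RecoveryStep` and `recovery_estimate` names are hypothetical and for illustration only.

```python
from dataclasses import dataclass


@dataclass
class RecoveryStep:
    name: str
    minutes: float   # claimed duration, not a measured one
    exercised: bool  # has this step ever been run under stress?


def recovery_estimate(steps):
    """Return (claimed total minutes, names of steps never exercised)."""
    claimed = sum(step.minutes for step in steps)
    untested = [step.name for step in steps if not step.exercised]
    return claimed, untested


# Hypothetical plan with made-up numbers.
PLAN = [
    RecoveryStep("detect", 45, True),
    RecoveryStep("revoke access", 10, True),
    RecoveryStep("isolate systems", 30, False),
    RecoveryStep("restore from backup", 240, False),
]

claimed, untested = recovery_estimate(PLAN)
print(f"claimed recovery time: {claimed} minutes")
if untested:
    print("theoretical until exercised:", ", ".join(untested))
```

Any step that lands in the untested list makes the total a guess, however precise the number looks.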

These three elements form the core model. Susceptibility, damage, and recovery time. The model works because it stays tied to outcomes and gives both engineers and leadership a shared way to reason about risk.

Entropy pushes against all three. Susceptibility grows as access and integrations expand. Damage increases as systems become more interconnected and privileges accumulate. Recovery time stretches as complexity obscures ownership and response paths. If nobody actively pushes back, security continues to degrade.

If it doesn't reduce risk, it's attack surface.

Every control, every integration, every feature must justify itself against susceptibility, damage, or recovery time. If it does not make compromise harder, limit the impact, or speed up restoration, it increases exposure. There is no neutral. Complexity without purpose accelerates entropy.
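That test can be applied mechanically to an inventory. The sketch below assumes each control, integration, or feature is tagged with the dimensions it demonstrably reduces, and flags anything with no tag. The inventory entries and the `attack_surface` helper are hypothetical.

```python
DIMENSIONS = {"susceptibility", "damage", "recovery_time"}

# Hypothetical inventory: item name -> dimensions it demonstrably reduces.
INVENTORY = {
    "mfa-on-admin": {"susceptibility"},
    "network-segmentation": {"damage"},
    "tested-restore-runbook": {"recovery_time"},
    "legacy-sso-bridge": set(),
    "unused-reporting-webhook": set(),
}


def attack_surface(inventory):
    """Return the names of items that reduce none of the three dimensions."""
    claimed = {dim for dims in inventory.values() for dim in dims}
    unknown = claimed - DIMENSIONS
    if unknown:
        raise ValueError(f"unknown dimensions: {sorted(unknown)}")
    return sorted(name for name, dims in inventory.items() if not dims)


for name in attack_surface(INVENTORY):
    print(f"{name}: no justification, treat as attack surface")
```

The tags are only as good as the evidence behind them. An item tagged on faith belongs in the flagged list until someone shows what it actually reduces.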

Security Brutalism aligns directly with this model. It removes decoration and forces systems to show how they behave under stress. It exposes real paths, real dependencies, and real failure modes. Systems are designed so compromise does not cascade, damage stays contained, and recovery remains achievable.

Strong security is security that survives contact with reality. Credentials get stolen. Systems get accessed. Controls get bypassed. The question is what happens next. Does the attacker move freely or do they hit resistance. Does the system fail completely or in contained ways. Does recovery depend on guesswork or on practiced execution.

Survivability engineering returns security to fundamentals. Start with a real system. Assume compromise. Trace the path. Define the damage. Measure the recovery. Test the assumptions. Remove what does not reduce risk. Repeat as the system changes. That loop holds because it reflects how systems actually behave over time.

Build for the breach. Build for recovery. Everything else is attack surface.

Originally posted on The Security Brutalist blog.